The delay of Siri 2.0 isn’t just a product update pushed back. It’s a case study in what happens when testing gaps surface too late in AI development.

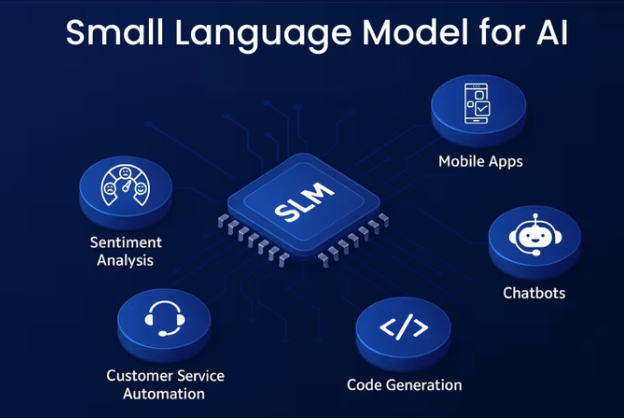

Reports suggest performance instability, routing confusion between AI models, and conversational accuracy issues were discovered during internal reviews. None of these are cosmetic defects. They are architectural weaknesses.

Performance testing likely didn’t simulate real-world constraints early enough: device variability, network latency, background processes. Integration logic between ecosystems likely wasn’t stress-tested across enough scenarios. And conversational validation likely didn’t sufficiently benchmark multi-turn context retention.
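To make "benchmarking multi-turn context retention" concrete, here is a minimal sketch. It assumes a hypothetical agent interface (a callable that takes the conversation history and returns a reply string). The function name `retention_score` and both toy agents are illustrative only and do not describe how any real assistant is tested.

```python
def retention_score(agent, conversation):
    """Share of checked turns whose reply contains the expected fact.

    conversation: list of (user_message, expected_substring_or_None).
    A turn with expected=None is context setup and is not scored.
    """
    history = []
    checked = passed = 0
    for user_message, expected in conversation:
        history.append(("user", user_message))
        reply = agent(list(history))  # hypothetical interface: history in, text out
        history.append(("assistant", reply))
        if expected is not None:
            checked += 1
            passed += expected.lower() in reply.lower()
    return passed / checked if checked else 1.0


def stateless_agent(history):
    # Toy stand-in that only looks at the latest message.
    _, last = history[-1]
    return "Reminder set for 7am." if "7am" in last else "I'm not sure."


def stateful_agent(history):
    # Toy stand-in that considers every user message so far.
    user_text = " ".join(text for role, text in history if role == "user")
    return "It is set for 7am." if "7am" in user_text else "I'm not sure."


script = [
    ("Set a reminder for 7am tomorrow.", None),
    ("What time is it set for?", "7am"),
]
```

Running `retention_score(stateless_agent, script)` and `retention_score(stateful_agent, script)` on the same script separates an agent that loses context from one that keeps it. Substring matching is a deliberately crude check; a real suite would need a more robust grader.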

This highlights a major misconception in AI product development: testing is not a final checkpoint. It is a design input.

AI agents operate across unpredictable environments. They interpret ambiguous intent, switch between models, and execute actions that directly impact user trust. When validation happens late, teams aren’t fixing bugs — they’re rethinking system architecture.

If testing blind spots can reportedly cost an organization like Apple a six-month delay, smaller enterprises with fewer resources face even greater risk.

The lesson isn’t “test more.”

It’s “design for testability.”

Performance SLAs should be defined before architecture lock. Integration contracts should be validated continuously. AI accuracy should be measured against real user tasks, not generic benchmarks.
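As a rough sketch of what that can look like in practice, the snippet below encodes a latency budget as data and scores an agent on task-level checks. Everything here is an assumption for illustration: the `LatencySla` class, the p95 threshold, the nearest-rank percentile method, and the shape of the task list are hypothetical, not a description of any team's actual process.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencySla:
    """Hypothetical performance budget agreed before architecture lock."""
    p95_ms: float


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_sla(latencies_ms, sla):
    """True if the observed 95th-percentile latency is within budget."""
    return percentile(latencies_ms, 95) <= sla.p95_ms


def task_success_rate(agent, tasks):
    """Fraction of (request, check) pairs where check(agent(request)) passes."""
    passed = sum(1 for request, check in tasks if check(agent(request)))
    return passed / len(tasks)


# Usage with made-up numbers and a toy agent that just upper-cases input.
latencies = [120, 150, 180, 900, 140]
within_budget = meets_sla(latencies, LatencySla(p95_ms=800))  # False

tasks = [
    ("call mom", lambda out: "MOM" in out),
    ("set timer", lambda out: "alarm" in out),
]
rate = task_success_rate(lambda req: req.upper(), tasks)  # 0.5
```

Keeping the budget and the task checks in code means they can run on every build instead of waiting for a final review.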

Innovation moves fast. Trust breaks faster.

#AITesting #QualityEngineering #AIAgents #PerformanceTesting #SoftwareEngineering #TechLeadership
